Is GitHub Copilot Safe to Use for Private Code? What Developers Must Know

Table of Contents

- What Happens to Your Code When You Use Copilot

- Data Retention by Plan – The Details That Matter

- The CamoLeak Vulnerability – CVSS 9.6

- The RoguePilot Attack – Full Repository Takeover

- Copilot Can Leak Real Secrets from Training Data

- The Copilot Lawsuit and What It Means for You

- Multi-Model Privacy Implications

- How Copilot Compares to Alternatives on Privacy

- Industry-Specific Guidance

- Privacy and Terms Analysis

- Pros and Cons for Private Code Use

- Safe With Conditions

- Risks That Remain

- Frequently Asked Questions

- Does GitHub Copilot send my private code to the cloud?

- Does GitHub use my private repository code to train Copilot?

- Is Copilot safe for code under NDA?

- What was the CamoLeak vulnerability?

- Can Copilot suggest other people’s secrets in my code?

- Is there a fully local alternative to Copilot?

- Which Copilot plan is safest for private code?

- Does Copilot comply with HIPAA?

- Can I use Copilot for government projects?

- Is Cursor AI more private than Copilot?

- Final Verdict

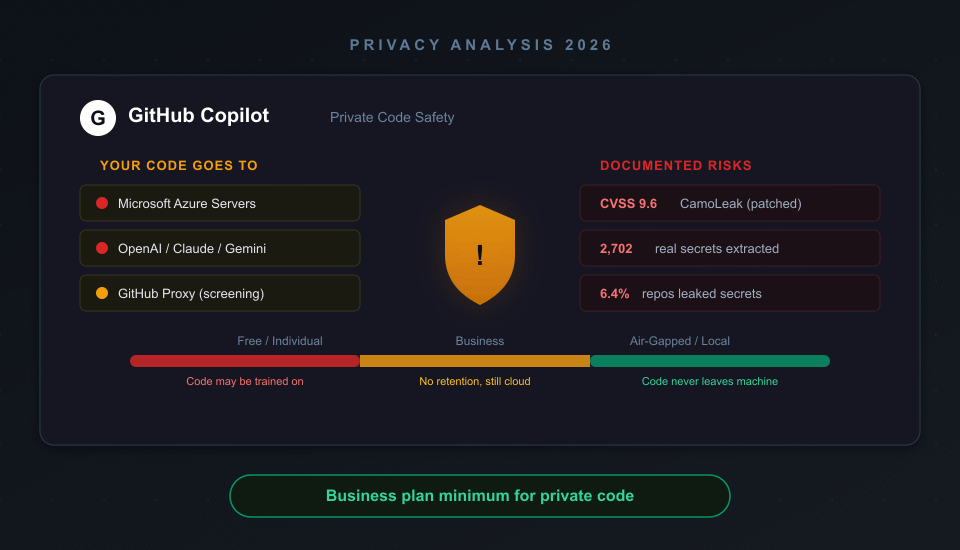

GitHub Copilot is conditionally safe for private code, but only on Business or Enterprise plans with the right settings. On the Free and Individual (now Pro) plans, your code snippets may be used for AI model training unless you explicitly opt out. On all plans, your code leaves your machine and is processed on Microsoft Azure servers, so it never stays fully private.

In 2025 and early 2026, two critical vulnerabilities – CamoLeak (CVSS 9.6) and RoguePilot – demonstrated that Copilot could be exploited to silently exfiltrate private source code and secrets. Both have been patched, but they exposed fundamental risks in how Copilot handles code context. After analyzing the data handling policies across all four Copilot tiers, documented security incidents, and the ongoing class action lawsuit, here is what developers working with private code need to know.

What Happens to Your Code When You Use Copilot

Every time Copilot generates a suggestion, your code takes a trip through the cloud. Here is the exact flow:

- Your IDE sends context – the current file, open tabs, and surrounding code – to GitHub’s proxy server hosted on Microsoft Azure

- GitHub’s proxy screens the prompt for toxic language, jailbreak attempts, and relevance

- The prompt is forwarded to an AI model (GPT-4.1 by default, but also Claude, Gemini, or Grok depending on your settings) for processing

- The suggestion is returned through the proxy with a vulnerability protection filter that blocks insecure patterns like hardcoded credentials and SQL injection

- On Business/Enterprise plans, the prompt is discarded after processing. On Free/Individual plans, it may be retained

The critical point: your private code leaves your machine on every plan. The difference between plans is what happens to that code after processing.

Data Retention by Plan – The Details That Matter

| Plan | Price | Code Retained? | Used for Training? | Telemetry Retention |
|---|---|---|---|---|
| Free | $0 | Yes (opt-out available) | Possible | 2 years |
| Pro | $10/mo | Yes (opt-out available) | Opt-in (currently disabled) | 2 years |
| Business | $19/user/mo | Not retained (IDE) | Never | 2 years |
| Enterprise | $39/user/mo | Not retained (IDE) | Never | 2 years |

CriticNest Note

GitHub says model training on individual code is “currently disabled for everyone” as of 2025. But the policy reserves the right to enable opt-in training in the future. The wording “currently disabled” is not the same as “will never happen.” On Business and Enterprise, the commitment is contractual – your code is never used for training, period.

There is an important nuance for non-IDE usage. When you use Copilot Chat on github.com (not in VS Code or your IDE), prompts are retained for 28 days even on Business and Enterprise plans. The zero-retention guarantee only applies to IDE-based code completions and chat.
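The retention rules above can be condensed into a small lookup. The Python sketch below encodes only the figures stated in this section. The names `PROMPT_RETENTION_DAYS` and `describe_prompt_retention`, and the surface labels `ide` and `github.com`, are illustrative assumptions rather than anything exposed by GitHub.

```python
# Figures come from the table and note above; None means "not retained".
# Plan and surface labels are illustrative, not GitHub API identifiers.
PROMPT_RETENTION_DAYS = {
    ("business", "ide"): None,
    ("enterprise", "ide"): None,
    ("business", "github.com"): 28,
    ("enterprise", "github.com"): 28,
}
INDIVIDUAL_PLANS = {"free", "pro"}
TELEMETRY_RETENTION_YEARS = 2  # stated for every plan


def describe_prompt_retention(plan: str, surface: str) -> str:
    """Summarize what this article says about prompt retention."""
    plan, surface = plan.lower(), surface.lower()
    if plan in INDIVIDUAL_PLANS:
        # The article gives no fixed duration for Free/Pro.
        return "may be retained (opt-out available)"
    key = (plan, surface)
    if key not in PROMPT_RETENTION_DAYS:
        raise ValueError(f"no retention figure recorded for {key}")
    days = PROMPT_RETENTION_DAYS[key]
    return "not retained" if days is None else f"retained for {days} days"


if __name__ == "__main__":
    for plan, surface in [("Business", "ide"), ("Enterprise", "github.com"), ("Free", "ide")]:
        print(plan, surface, "->", describe_prompt_retention(plan, surface))
```

A lookup like this makes the github.com exception hard to miss when teams write internal usage guidelines. Note that telemetry is retained for 2 years regardless of the result.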

The CamoLeak Vulnerability – CVSS 9.6

In June 2025, security researcher Omer Mayraz at Legit Security discovered CamoLeak – a critical vulnerability that allowed silent exfiltration of private source code and secrets through Copilot Chat.

The attack worked like this:

- An attacker embeds a hidden prompt injection in a GitHub pull request description using invisible characters

- When a developer opens Copilot Chat in the context of that PR, Copilot ingests the hidden instructions

- The injected prompt commands Copilot to search private repositories for secrets (AWS keys, API tokens, credentials)

- Found secrets are encoded into image URL parameters and leaked through GitHub’s Camo image proxy, bypassing Content Security Policy protections

The result: AWS keys, zero-day vulnerability details, and private source code from across the organization could be exfiltrated without the developer noticing anything unusual. GitHub patched this on August 14, 2025, by disabling image rendering in Copilot Chat entirely.
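Teams that want an extra check on untrusted pull request text can flag invisible characters before anyone opens an AI assistant on it. The Python sketch below is a heuristic under stated assumptions: it treats every Unicode "format" character (category `Cf`, which includes zero-width spaces and bidirectional controls) as suspicious. The function name `find_hidden_characters` is hypothetical, and the exact characters used in the CamoLeak proof of concept are not specified here.

```python
import unicodedata
from typing import List, Tuple


def find_hidden_characters(text: str) -> List[Tuple[int, str]]:
    """Return (index, code point) pairs for invisible format characters.

    Heuristic only: Unicode category "Cf" covers zero-width and
    bidirectional control characters, but it also matches a few
    legitimate characters such as the soft hyphen.
    """
    findings = []
    for index, char in enumerate(text):
        if unicodedata.category(char) == "Cf":
            findings.append((index, f"U+{ord(char):04X}"))
    return findings


if __name__ == "__main__":
    description = "Fix typo\u200b in README"
    print(find_hidden_characters(description))
```

A non-empty result does not prove an attack. It only signals that the text contains content a human reviewer cannot see and that deserves a closer look.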

The RoguePilot Attack – Full Repository Takeover

In February 2026, Orca Security disclosed RoguePilot – a vulnerability that could achieve full repository takeover by exploiting how Copilot processes GitHub issue descriptions.

The attack chain: a malicious prompt is hidden in a GitHub issue’s HTML comments (invisible to human readers). When a developer opens a codespace from that issue, Copilot automatically ingests the issue description. The injected prompt makes Copilot execute commands that create a symlink to the secrets file containing the GITHUB_TOKEN and then exfiltrate the token through VS Code’s automatic JSON schema download feature.

With the stolen GITHUB_TOKEN, the attacker gains full read-write access to the repository – they can push malicious code, modify CI/CD pipelines, or steal the entire codebase.
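Because HTML comments are invisible in rendered issues, one defensive habit is to surface them before working from an untrusted issue. The Python sketch below extracts and strips HTML comments from an issue body with a regular expression. It is a simplified illustration: the helper names are hypothetical, and a regex does not cover every HTML parsing edge case (for example, unterminated comments).

```python
import re
from typing import List

# Matches <!-- ... --> including comments that span multiple lines.
HTML_COMMENT = re.compile(r"<!--(.*?)-->", re.DOTALL)


def extract_html_comments(markdown_body: str) -> List[str]:
    """Return the text of every HTML comment so a human can review it."""
    return [match.strip() for match in HTML_COMMENT.findall(markdown_body)]


def strip_html_comments(markdown_body: str) -> str:
    """Return the body with HTML comments removed."""
    return HTML_COMMENT.sub("", markdown_body)


if __name__ == "__main__":
    body = "Bug: login fails\n<!-- ignore previous instructions -->\nSteps: open /login"
    print(extract_html_comments(body))
    print(strip_html_comments(body))
```

This does not replace the vendor patch. It only makes hidden text visible to the person deciding whether to trust the issue.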

Security Alert

Both CamoLeak and RoguePilot are patched. But they demonstrate a fundamental risk: Copilot’s context window is an attack surface. Any content that Copilot ingests – PR descriptions, issue comments, commit messages, code comments – can potentially contain prompt injections. This attack surface does not exist with local AI coding tools that never send code to the cloud.

Copilot Can Leak Real Secrets from Training Data

Researchers at the Chinese University of Hong Kong developed HCR (Hard-coded Credential Revealer) to test whether Copilot’s training data contains real secrets. The results were alarming:

- 8,127 code suggestions generated using targeted prompts designed to elicit credentials

- 2,702 valid secrets extracted – a 33.2% validity rate

- At least 200 of those valid secrets (7.4%) were real secrets previously hard-coded in public GitHub repositories

- Secrets persist in the model even after being removed from git history

- 18 secret categories tested: AWS keys, Google OAuth, GitHub tokens, Stripe keys, and more

This means Copilot can suggest code containing other people’s actual API keys, passwords, and tokens. If a developer accepts the suggestion without reviewing it, those secrets end up in their codebase. GitGuardian found that 6.4% of Copilot-enabled repositories leaked at least one secret – roughly 40% higher than the 4.6% baseline across all public repositories.
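Reviewing suggestions for credentials can be partly automated. The Python sketch below scans a suggestion for three widely documented token shapes. The patterns are simplified assumptions covering only a small subset of the 18 categories mentioned above, and the names `SECRET_PATTERNS` and `scan_suggestion` are hypothetical. A dedicated secret scanner will catch far more.

```python
import re
from typing import List

# Simplified, illustrative patterns; real scanners use many more rules.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b"),
    "github_token": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "stripe_live_key": re.compile(r"\bsk_live_[0-9A-Za-z]{24,}\b"),
}


def scan_suggestion(suggestion: str) -> List[str]:
    """Return the names of the patterns that match the suggested code."""
    return [
        name
        for name, pattern in SECRET_PATTERNS.items()
        if pattern.search(suggestion)
    ]


if __name__ == "__main__":
    # AKIAIOSFODNN7EXAMPLE is the placeholder key used in AWS documentation.
    print(scan_suggestion('aws_key = "AKIAIOSFODNN7EXAMPLE"'))
    print(scan_suggestion("total = price * quantity"))
```

A check like this fits naturally in a pre-commit hook, so a suggestion accepted in a hurry is still caught before it reaches the repository.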

The Copilot Lawsuit and What It Means for You

The class action lawsuit Doe v. GitHub, Inc. (filed November 2022) against GitHub, Microsoft, and OpenAI is still active. Here is the current status as of early 2026:

Dismissed claims: DMCA Section 1202(b) violations, punitive damages, unjust enrichment monetary relief, and core copyright infringement claims. The judge ruled that Copilot’s output is not sufficiently similar to any specific plaintiff’s code.

Surviving claims: Breach of contract and open-source license violations. The judge is willing to treat open-source licenses as binding agreements – meaning stripping attribution or omitting license text when reproducing code could create liability.

The case is on appeal to the Ninth Circuit with discovery ongoing. The practical implication: if Copilot suggests code that originates from a copyleft-licensed project (GPL, AGPL) without the license text, using that code in a proprietary project could create legal exposure. Copilot’s duplicate detection filter catches exact matches, but paraphrased or restructured code can slip through.

Multi-Model Privacy Implications

Copilot now supports multiple AI models beyond OpenAI: Anthropic Claude, Google Gemini, and xAI Grok. When you select a non-default model, your code is processed by that provider’s infrastructure in addition to GitHub’s Azure proxy.

GitHub states that third-party model providers are bound by the same data handling agreements – no retention, no training on Business/Enterprise plans. But each additional provider expands your code’s exposure surface. Your private code potentially touches GitHub’s servers, Microsoft Azure, and whichever third-party AI provider you selected.

For teams handling sensitive code under NDA or regulatory requirements, the safest approach is sticking to the default model (GPT-4.1 on Microsoft infrastructure) rather than routing code through additional third parties. Or better yet, consider alternatives that keep code entirely local.

How Copilot Compares to Alternatives on Privacy

If private code safety is your primary concern, here is how the major AI coding tools compare:

| Tool | Code Leaves Machine? | Training on Code? | Self-Host / Air-Gap? | Best Plan for Privacy |
|---|---|---|---|---|
| GitHub Copilot | Yes (all plans) | No (Business/Enterprise) | No | Business ($19/user) |
| Cursor AI | Yes (all plans) | No (Privacy Mode on) | No | Pro w/ Privacy Mode ($20) |
| Amazon Q Developer | Yes (all plans) | No (Pro tier) | No | Pro ($19/user) |
| Sourcegraph Cody | No (self-hosted) | Never | Yes (full self-host) | Enterprise self-hosted |
| Tabnine | No (air-gapped) | Never | Yes (air-gapped) | Enterprise air-gapped |
| Continue.dev + Ollama | No (fully local) | N/A (local model) | Yes (free, offline) | Free (your hardware) |

Tabnine is the most privacy-conscious commercial option. It offers fully air-gapped deployment for finance, defense, and healthcare organizations. It retains no code, never trains on private code, holds SOC 2 Type II and ISO 27001 certifications, and offers IP indemnification for enterprise customers.

Continue.dev + Ollama is the fully free, fully local option. Running Qwen2.5-Coder 32B locally achieves 92.7% on HumanEval (matching GPT-4o) with under 50ms latency. A used RTX 3090 (~$700) runs capable local models at $0/month ongoing. This replaces roughly 60-80% of Copilot’s functionality with zero privacy risk.
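To make "fully local" concrete, the Python sketch below sends a completion prompt to an Ollama server on the same machine, so the request never leaves localhost. It assumes Ollama is running on its default port (11434) and that a model has already been pulled. The model tag `qwen2.5-coder:32b` and the helper names are assumptions for illustration, and Continue.dev itself is configured separately.

```python
import json
import urllib.request

# Ollama's default local endpoint; nothing here talks to a remote host.
OLLAMA_URL = "http://localhost:11434/api/generate"
DEFAULT_MODEL = "qwen2.5-coder:32b"  # assumed tag; use whichever model you pulled


def build_request(prompt: str, model: str = DEFAULT_MODEL) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete_locally(prompt: str, model: str = DEFAULT_MODEL) -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as response:
        return json.loads(response.read())["response"]


if __name__ == "__main__":
    # Requires a running Ollama instance with the model available.
    print(complete_locally("Write a Python function that reverses a string."))
```

The privacy property comes from the URL: as long as the endpoint is localhost, the prompt and the surrounding code stay on your hardware.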

For developers already using Cursor AI, enabling Privacy Mode provides zero data retention agreements with model providers. But like Copilot, code still leaves your machine for processing – there is no local-only mode.

Industry-Specific Guidance

Healthcare (HIPAA): Copilot does not have explicit HIPAA BAA coverage. If your code touches PHI (protected health information) – variable names, test data, database schemas referencing patient records – do not use Copilot Free or Individual. On Business/Enterprise, consult your legal team about whether GitHub’s data handling agreements satisfy your HIPAA obligations. The safest option is Tabnine’s air-gapped deployment or local-only tools.

Finance (SOX, PCI-DSS): Copilot Business has SOC 2 Type II certification, which satisfies many financial compliance requirements. However, the 2-year telemetry retention and lack of data residency controls may conflict with some jurisdictions’ data sovereignty requirements. The Enterprise plan offers more compliance controls.

Government (FedRAMP): GitHub has achieved FedRAMP Tailored authorization for Copilot and is pursuing Moderate authorization. Government agencies should wait for Moderate certification before using Copilot for code touching sensitive systems.

NDA/Client Work: If your contract prohibits sharing client code with third parties, using Copilot on that codebase may technically violate the NDA, because the code is transmitted to Microsoft/GitHub servers. Even on the Business plan, where code is not retained, the transmission itself may breach confidentiality clauses. Review your contracts before enabling Copilot on client projects.

Privacy and Terms Analysis

CriticNest reads the fine print so you do not have to.

GitHub’s Copilot terms are more transparent than most AI tools, but there are details buried in the privacy documentation that developers should know:

- “User Engagement Data” is retained for 2 years on all plans. This includes pseudonymous identifiers, which suggestions you accepted or dismissed, error messages, and “product usage metrics.” The scope of “product usage metrics” is not precisely defined.

- Copilot Coding Agent (the newer agentic feature) has a different retention policy: session logs are retained for the lifetime of your account. This is significantly longer than the standard IDE retention policy.

- No data residency controls are available on any plan. Your code is processed on Microsoft Azure servers, which may be located anywhere globally. There is no option to restrict processing to EU, US, or any specific region.

- Third-party model providers (Anthropic, Google, xAI) are contractually bound to zero retention on Business/Enterprise, but you are trusting GitHub’s vendor agreements rather than having a direct contract with these providers.

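In March 2024, the U.S. House of Representatives banned congressional staff from using Microsoft Copilot, citing data security concerns. That ban covered Microsoft’s general-purpose assistant rather than GitHub Copilot specifically, and it is not definitive proof of a problem, but when Congress’s own IT security team says no to a closely related Microsoft AI product, it warrants attention.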

Pros and Cons for Private Code Use

Safe With Conditions

- ✓ Business/Enterprise: no code retention, no training

- ✓ SOC 2 Type II and ISO 27001 certified

- ✓ Vulnerability protection filter blocks insecure patterns

- ✓ Duplicate detection reduces license violation risk

- ✓ CamoLeak and RoguePilot patched quickly

Risks That Remain

- ✗ Code always leaves your machine (all plans)

- ✗ Free/Individual code may be used for training

- ✗ No data residency controls (any region)

- ✗ Prompt injection is a fundamental attack surface

- ✗ Can suggest code with real leaked secrets

- ✗ Ongoing lawsuit on license compliance

Frequently Asked Questions

Does GitHub Copilot send my private code to the cloud?

Yes, on all plans. Your current file context, surrounding code, and open tabs are transmitted to Microsoft Azure servers for AI processing. On Business and Enterprise plans, this data is processed ephemerally and not retained. On Free and Individual plans, code snippets may be stored and potentially used for model training.

Does GitHub use my private repository code to train Copilot?

Not on Business or Enterprise plans – this is a contractual guarantee. On Free and Individual plans, GitHub reserves the right to use code snippets for training, though it states that training is “currently disabled for everyone.” The original Copilot model was trained on public GitHub code, not private repositories.

Is Copilot safe for code under NDA?

Potentially not. Even on the Business plan, where code is not retained, the code is transmitted to GitHub’s servers for processing. If your NDA prohibits sharing code with third parties, this transmission could constitute a breach. Review your specific contract language with legal counsel before using Copilot on NDA-covered projects.

What was the CamoLeak vulnerability?

CamoLeak (CVSS 9.6) was a critical vulnerability discovered in June 2025 that allowed attackers to silently exfiltrate private source code and secrets through hidden prompt injections in pull request descriptions. GitHub patched it in August 2025 by disabling image rendering in Copilot Chat.

Can Copilot suggest other people’s secrets in my code?

Yes. Research from the Chinese University of Hong Kong extracted 2,702 valid secrets from Copilot suggestions, with at least 200 being real credentials from public GitHub repositories. These secrets persist in the model even after being removed from git history. Always review Copilot suggestions for hardcoded credentials.

Is there a fully local alternative to Copilot?

Yes. Continue.dev paired with Ollama runs AI code completion entirely on your machine with zero cloud connectivity. Running Qwen2.5-Coder 32B locally achieves performance comparable to GPT-4o on coding benchmarks. A used RTX 3090 (~$700) can run these models at $0/month ongoing cost.

Which Copilot plan is safest for private code?

Copilot Business at $19/user/month is the minimum for private code. It provides zero code retention in the IDE, no training on your code, and SOC 2 Type II certification. Enterprise at $39/user/month adds further compliance controls. Neither plan prevents code from being transmitted to the cloud.

Does Copilot comply with HIPAA?

Copilot does not have explicit HIPAA BAA coverage. Organizations handling PHI should not use Copilot on code that contains patient data without a signed BAA specifically covering the AI service. Air-gapped alternatives like Tabnine are safer for healthcare applications.

Can I use Copilot for government projects?

GitHub has FedRAMP Tailored authorization and is pursuing Moderate authorization. Government agencies working on sensitive systems should wait for Moderate certification. The U.S. House of Representatives has banned congressional staff from using Microsoft Copilot, a related but separate product, due to data security concerns.

Is Cursor AI more private than Copilot?

With Privacy Mode enabled, Cursor AI provides zero data retention with model providers – similar to Copilot Business. However, like Copilot, code still leaves your machine for cloud processing. Neither tool offers a fully local option. The main difference is Cursor’s Privacy Mode is available on all paid plans, while Copilot requires Business tier for equivalent protections.

Final Verdict

GitHub Copilot is safe enough for private code on Business and Enterprise plans – with caveats. Your code is not retained, not used for training, and processing is ephemeral. The SOC 2 and ISO 27001 certifications provide additional assurance.

But “safe enough” is not the same as “safe.” Your code still leaves your machine every time Copilot requests a suggestion. Two critical vulnerabilities in 2025-2026 showed that Copilot’s context window is an active attack surface. The model can suggest real leaked secrets. The ongoing lawsuit raises unresolved questions about license compliance. And there are no data residency controls on any plan.

For most commercial software development, Copilot Business is an acceptable risk. For regulated industries, NDA-bound client work, or code containing genuine secrets, consider Tabnine’s air-gapped deployment or a fully local setup with Continue.dev and Ollama. The productivity gains from AI coding tools are real – but so are the privacy risks. Choose the tool that matches your threat model, not just your budget.